Web scraping, also referred to as web harvesting or web data extraction, is the process of extracting large quantities of data from websites and saving it to a file on your computer or to a database, typically in a spreadsheet or other tabular format.

When we browse the web, most websites can only be viewed by using an internet browsing application (Internet Explorer, Firefox, Chrome, etc.) and do not offer an option to save a copy of the website’s data for private purposes. In this case, the only thing left to do is to manually copy and paste the data. As you may already know, going about this task manually is labor-intensive and can take you many hours or days to complete, depending on how much data you need to compile.

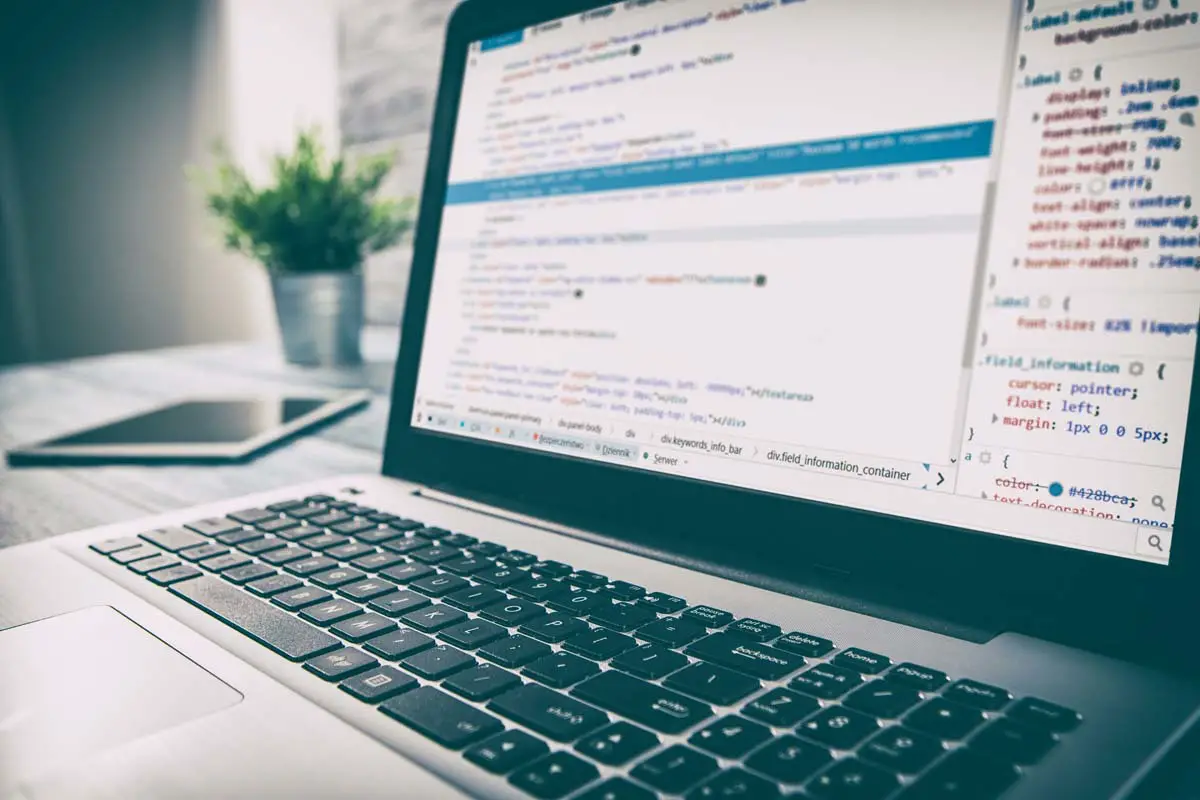

Web scraping simplifies the task by automating it: instead of sitting in front of a computer all day copy-pasting website data into a spreadsheet, a web scraping script does the work in a fraction of the time. In this tutorial, we’re going to learn the basics of automating and scraping the web using JavaScript. One popular option is Puppeteer, a Node library whose API drives headless Chrome, that is, Chrome running without a visible browser window, so that JavaScript code can operate the browser automatically. The walkthrough below uses a lighter setup: a small Express server that fetches a page with Request and parses the returned HTML with Cheerio.

Prerequisites

Before we begin, take a moment to make sure that you have the following technologies ready on your machine:

- NodeJS

- ExpressJS: the widely used Node web framework

- Request: makes the HTTP calls

- Cheerio: traverses the DOM and extracts data

Setup

The setup is pretty straightforward. Once you are comfortable with Node.js, add Express, Request, and Cheerio to your project as dependencies. The following package.json file declares them:

{
  "name": "node-web-scrape",
  "version": "0.0.1",
  "description": "Scrape le web.",
  "main": "server.js",
  "author": "authorsname",
  "dependencies": {
    "express": "latest",
    "request": "latest",
    "cheerio": "latest"
  }
}

With the package.json file in place, install the dependencies with:

npm install

Now let’s see what we’ll be building. In this guide, we’ll make a single request to IMDB to get:

- Movie title

- Year of its release

- IMDB community score

After collecting this information, we’ll save it to a .json file on your computer.

Application

Our web scraping application will be very simple. It will:

- Launch a web server

- Respond to a visit to a URL on our server, which activates the web scraper

- Request the page that contains the information we want to extract

- Receive the HTML of that page on our server

- Traverse the DOM and pick out the requested information

- Format the extracted data into the form we need

- Save the formatted information to a .json file on our computer

Now let’s set the logic in our server.js file:

var express = require('express');
var fs = require('fs');
var request = require('request');
var cheerio = require('cheerio');
var app = express();

app.get('/scrape', function (req, res) {
  // Web scraping will be done here
});

app.listen(8081);
console.log('Done on port 8081');
exports = module.exports = app;

Requesting Information

Now that the application is ready to run, we’ll have to make the request to the external URL containing the information we want to scrape:

var express = require('express');
var fs = require('fs');
var request = require('request');
var cheerio = require('cheerio');
var app = express();

app.get('/scrape', function (req, res) {
  // The page we want to scrape info from: The Wolf of Wall Street (2013)
  var url = 'http://www.imdb.com/title/tt0993846/';

  // Structure of request
  // First parameter is our URL
  // The callback takes 3 parameters: an error, the response object and the HTML
  request(url, function (error, response, html) {
    // Check to make sure no errors occurred
    if (!error) {
      // Load the returned HTML into cheerio
      var $ = cheerio.load(html);

      // Lastly, define the variables we want to capture
      var title, release, rating;
      var json = { title: '', release: '', rating: '' };
    }
  });
});

app.listen(8081);
console.log('Done on port 8081');
exports = module.exports = app;

The request function takes two parameters: a URL and a callback. For the URL, we pass the link to the IMDB movie page. The callback receives three arguments: error, response and html.
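Note that the `!error` check only catches failures at the network level; a 404 or 500 page still arrives with `error` empty. As a minimal sketch of a stricter check, the helper below also looks at the status code and the body. The name `checkResponse` is hypothetical and not part of Request; it only assumes that `response.statusCode` carries the HTTP status.

```javascript
// Hypothetical helper: decides whether the callback's arguments are usable.
// Returns null when the HTML can be parsed, otherwise a short reason string.
function checkResponse(error, response, html) {
  if (error) {
    return 'request failed: ' + error.message;
  }
  if (!response || response.statusCode !== 200) {
    return 'unexpected status: ' + (response ? response.statusCode : 'none');
  }
  if (typeof html !== 'string' || html.length === 0) {
    return 'empty body';
  }
  return null;
}

// Possible use inside the request callback (sketch only):
// request(url, function (error, response, html) {
//   var problem = checkResponse(error, response, html);
//   if (problem) { return res.status(502).send(problem); }
//   var $ = cheerio.load(html);
// });
```

Returning a reason string instead of throwing keeps the route handler in control of what it sends back to the browser.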

Traversing the DOM

First, we’ll need the movie title. Open the IMDB page for the movie, open Developer Tools and inspect the movie title element. We’re looking for a unique element that will help us locate the title of the movie (the header). Search for <h1 class="header">.

var express = require('express');
var fs = require('fs');
var request = require('request');
var cheerio = require('cheerio');
var app = express();

app.get('/scrape', function (req, res) {
  var url = 'http://www.imdb.com/title/tt0993846/';

  request(url, function (error, response, html) {
    if (!error) {
      var $ = cheerio.load(html);
      var title, release, rating;
      var json = { title: '', release: '', rating: '' };

      // Use the unique header class as a starting point
      $('.header').filter(function () {
        // Store the matched element in a variable
        var data = $(this);

        // The title sits in the first child element of the header tag
        // cheerio's jQuery-style API makes the navigation easy
        title = data.children().first().text();

        // After getting the title, store it in the json object
        json.title = title;
      });
    }
  });
});

app.listen(8081);
console.log('Done on port 8081');
exports = module.exports = app;

Next we’ll need the release year of the movie. We’ll need to repeat the process, this time finding a unique element in the DOM for release year (<h1> tag).

var express = require('express');
var fs = require('fs');
var request = require('request');
var cheerio = require('cheerio');
var app = express();

app.get('/scrape', function (req, res) {
  var url = 'http://www.imdb.com/title/tt0993846/';

  request(url, function (error, response, html) {
    if (!error) {
      var $ = cheerio.load(html);
      var title, release, rating;
      var json = { title: '', release: '', rating: '' };

      $('.header').filter(function () {
        var data = $(this);
        title = data.children().first().text();

        // Repeat the same process for the release year
        // It sits inside the last child element of the header tag
        release = data.children().last().children().text();

        json.title = title;

        // Save the release year to the json object
        json.release = release;
      });
    }
  });
});

app.listen(8081);
console.log('Done on port 8081');
exports = module.exports = app;

Finally, we repeat the process for community rating. The unique class name is .star-box-giga-star.

var express = require('express');
var fs = require('fs');
var request = require('request');
var cheerio = require('cheerio');
var app = express();

app.get('/scrape', function (req, res) {
  var url = 'http://www.imdb.com/title/tt0993846/';

  request(url, function (error, response, html) {
    if (!error) {
      var $ = cheerio.load(html);
      var title, release, rating;
      var json = { title: '', release: '', rating: '' };

      $('.header').filter(function () {
        var data = $(this);
        title = data.children().first().text();
        release = data.children().last().children().text();
        json.title = title;
        json.release = release;
      });

      // The community rating lives in a separate section of the DOM,
      // so it needs its own selector
      $('.star-box-giga-star').filter(function () {
        var data = $(this);

        // The .star-box-giga-star element holds exactly what we want,
        // so to get the rating we simply read its text
        rating = data.text();
        json.rating = rating;
      });
    }
  });
});

app.listen(8081);
console.log('Done on port 8081');
exports = module.exports = app;

That’s all there is to retrieving information from the website. In a nutshell, the steps mentioned above are:

- Find a unique element or attribute in the DOM to help single out the data

- If no unique element exists on a certain tag, then find the closest tag as your starting point

- If necessary, traverse the DOM

Formatting and Extracting

Now that we’ve successfully extracted the information, it’s time to format it and save it to the project folder. Everything has been stored in a variable named json. If you’re unfamiliar with the fs library, it gives us access to the computer’s file system. The following code writes the file to the file system:

var express = require('express');
var fs = require('fs');
var request = require('request');
var cheerio = require('cheerio');
var app = express();

app.get('/scrape', function (req, res) {
  var url = 'http://www.imdb.com/title/tt0993846/';

  request(url, function (error, response, html) {
    // Declared before the error check so the write below always has an object
    var title, release, rating;
    var json = { title: '', release: '', rating: '' };

    if (!error) {
      var $ = cheerio.load(html);

      $('.header').filter(function () {
        var data = $(this);
        title = data.children().first().text();
        release = data.children().last().children().text();
        json.title = title;
        json.release = release;
      });

      $('.star-box-giga-star').filter(function () {
        var data = $(this);
        rating = data.text();
        json.rating = rating;
      });
    }

    // Use the built-in 'fs' module to write to the file system
    // Pass 3 parameters to the writeFile function
    // Parameter 1: output.json - filename
    // Parameter 2: JSON.stringify(json, null, 4) - data to write; the 4-space indent makes the JSON easier to read
    // Parameter 3: callback function - reports whether the write succeeded
    fs.writeFile('output.json', JSON.stringify(json, null, 4), function (err) {
      if (err) {
        console.log('Could not write output.json: ' + err.message);
        return;
      }
      console.log('File successfully recorded. Check project directory for the output .json file');
    });

    // Finally, send a message to your browser to remind you that the app has no UI
    res.send('Check console');
  });
});

app.listen(8081);
console.log('Done on port 8081');
exports = module.exports = app;
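The text pulled out of the page is stored as-is, so it may carry stray whitespace or punctuation around the values. As a rough sketch of the formatting step, the helper below trims each field, extracts a four-digit year, and turns the rating into a number. The name `formatMovie` and the sample strings are made up for illustration; the real page text may look different.

```javascript
// Hypothetical helper: normalizes the raw strings collected by the scraper.
function formatMovie(raw) {
  // Collapse runs of whitespace (including newlines) and trim both ends.
  function clean(text) {
    return String(text || '').replace(/\s+/g, ' ').trim();
  }

  // Pull the first four-digit year out of text such as "(2013)".
  var yearMatch = clean(raw.release).match(/\d{4}/);

  // parseFloat reads a leading number and yields NaN when there is none.
  var score = parseFloat(clean(raw.rating));

  return {
    title: clean(raw.title),
    release: yearMatch ? Number(yearMatch[0]) : null,
    rating: isNaN(score) ? null : score
  };
}

// Illustrative input only; these strings are assumptions, not real page output.
var movie = formatMovie({
  title: '  The Wolf of Wall Street\n',
  release: ' (2013) ',
  rating: '\n8.2 '
});
console.log(JSON.stringify(movie, null, 4));
```

With this sample input the printed object has the title "The Wolf of Wall Street", the release year 2013 and the rating 8.2 as numbers. Fields that cannot be parsed come back as null, which makes a broken selector easy to spot in the output file.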

With the code complete, we are ready to scrape and store the extracted information. Start the server with node server.js, then open http://localhost:8081/scrape in your browser and see what happens.
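A quick way to confirm that the run worked is to load the output file and look for fields that came back empty, which usually means a selector no longer matches the page. The sketch below assumes the three keys used in this guide; the helper name `findEmptyFields` is hypothetical, and the demonstration writes its own temporary sample file instead of relying on a real scrape.

```javascript
var fs = require('fs');
var os = require('os');
var path = require('path');

// Hypothetical helper: loads a scrape result and lists the fields
// that are missing or empty.
function findEmptyFields(filePath) {
  var data = JSON.parse(fs.readFileSync(filePath, 'utf8'));
  return ['title', 'release', 'rating'].filter(function (key) {
    return data[key] === undefined || data[key] === null || data[key] === '';
  });
}

// Demonstration with a temporary file and made-up values. In the tutorial
// the path would be output.json in the project directory.
var samplePath = path.join(os.tmpdir(), 'output-sample.json');
fs.writeFileSync(
  samplePath,
  JSON.stringify({ title: 'Example', release: '2013', rating: '' }, null, 4)
);
console.log(findEmptyFields(samplePath)); // prints [ 'rating' ]
```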