Data Mining Jobs for Pros

With the rise of e-commerce and IT, the amount of data available for organizations to collect has grown enormously. This has given rise to data mining, in which data miners use their technical skills to extract useful information from data in the online world. The field is still growing; it is part of computer science and is usually placed on the business intelligence side of it. The data that miners collect lets them predict demand for products and services, as well as the need for human resource talent. Many big businesses have therefore started to employ data miners to help improve their operations.

Types of jobs related to data mining for pros

The most popular position in the field of data mining is that of an analyst. Data mining analysts are sought by a wide variety of industries. Their main job is to analyze data to help an organization improve its business: identifying data sources, predicting patterns in the industry, synthesizing the data set, and presenting the information in an easy-to-understand manner that supports decision making. Data mining analysts are especially common in education, engineering, and government services.

Data engineer is the second most popular position in the data mining field. Data engineers work more like traditional researchers and business analysts. Using the data they collect, they help identify problems for businesses, work out improvements to products and services, and tell organizations what their business requirements are.

The final popular job in data mining is that of a big data architect. Architects don’t really work on the collection and analysis of data but rather focus on the strategic planning and design of data systems. They design the IT systems that allow analysts and engineers to easily collect the data they need for their jobs.

Future of data mining

Since 2010, data mining has become an increasingly relevant field as businesses have realized how it can transform their operations and give them a chance to rise above their competition. The demand for data analysts, engineers, and architects has been on the rise ever since, with industries from IT to fashion using these professionals to improve their business.

Selenium Web Scraping Tutorial

Web scraping allows you to extract data from websites. It is an automated process in which a page’s HTML is parsed to extract data, which can then be manipulated and converted to the format of your liking for retrieval or analysis. The process is commonly used for data mining.

What is Selenium?

Selenium is an automation tool for web browsers. It is primarily used for testing websites, allowing you to test a site before you put it live. It lets you perform the following tasks on a website:

- Click buttons

- Enter information into the website’s forms

- Search for information on the website

It is also a tool that has been used for scraping websites. But note that if you send requests to a website too often, you risk having your IP address banned from it, so approach with caution.
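One common way to reduce the risk of a ban is to enforce a minimum pause between requests. The following is a minimal sketch of that idea using only the standard library; the class name `PoliteThrottle` and the two-second interval are illustrative assumptions, not part of Selenium or of any site's published policy.

```python
import time


class PoliteThrottle:
    """Enforce a minimum delay between consecutive requests (hypothetical helper)."""

    def __init__(self, min_interval_seconds, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval_seconds
        self._clock = clock      # injectable so the class can be tested without waiting
        self._sleep = sleep
        self._last_request = None

    def wait(self):
        """Block until at least min_interval has passed since the previous call."""
        now = self._clock()
        if self._last_request is not None:
            remaining = self.min_interval - (now - self._last_request)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last_request = now
        return now


# Assumed interval: at most one request every two seconds.
throttle = PoliteThrottle(2.0)
```

Calling `throttle.wait()` immediately before each page load keeps the request rate below the chosen limit; the right interval depends on the site being scraped.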

How to scrape with Selenium?

In order to scrape websites with Selenium you will need Python 3.x (Python 2.x has reached end of life and is not supported by current Selenium releases). Once you have that installed, you will need the following packages and driver:

Selenium package – allows you to interact with websites from Python

ChromeDriver – the executable Selenium uses to launch and control the Chrome browser

Virtualenv – helps create an isolated Python environment

- Create a new project folder. In it, create a file named requirements.txt and list selenium in it as a dependency.

- Then open the command line and create a virtual environment by typing the following command: $ virtualenv webscraping_example

- Activate the environment and install the dependency inside it by typing the following commands in the terminal: $ source webscraping_example/bin/activate and then (webscraping_example) $ pip install -r requirements.txt

- Back in the project folder, create another file and name it webscraping_example.py. Once done, add the following import statements:

- from selenium import webdriver

- from selenium.webdriver.common.by import By

- from selenium.webdriver.support.ui import WebDriverWait

- from selenium.webdriver.support import expected_conditions as EC

- from selenium.common.exceptions import TimeoutException

- You then need to put Chrome in Incognito mode. This is done in the webdriver by adding the incognito argument:

- option = webdriver.ChromeOptions()

- option.add_argument("--incognito")

- You will then create a new instance with this code: browser = webdriver.Chrome(executable_path='/Library/Application Support/Google/chromedriver', chrome_options=option). Note that Selenium 4 replaced these two keyword arguments: pass options=option instead of chrome_options, and supply the driver path through a Service object instead of executable_path.

- You can now start making requests by passing in the URL of the website you want to scrape.

- You may need to create a user account with GitHub to do this, but that is an easy process.

- You are now ready to scrape the data from the website.
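Put together, the steps above might look like the sketch below. It assumes Selenium 4 (where `webdriver.Chrome` accepts `options=`) and a Chrome installation the driver can find; the function name `fetch_heading_texts`, the choice of `h1` elements, and the 20-second timeout are illustrative assumptions rather than anything the tutorial prescribes.

```python
def fetch_heading_texts(url, timeout=20):
    """Open url in incognito Chrome and return the text of its <h1> elements."""
    # Imported inside the function so this file can be loaded even on a
    # machine where Selenium is not installed yet.
    from selenium import webdriver
    from selenium.common.exceptions import TimeoutException
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    options = webdriver.ChromeOptions()
    options.add_argument("--incognito")
    browser = webdriver.Chrome(options=options)
    try:
        browser.get(url)
        # Wait until at least one heading is visible before reading the page.
        WebDriverWait(browser, timeout).until(
            EC.visibility_of_element_located((By.TAG_NAME, "h1"))
        )
        return [element.text for element in browser.find_elements(By.TAG_NAME, "h1")]
    except TimeoutException:
        # The page did not show a heading in time; report nothing found.
        return []
    finally:
        # Always close the browser, even if something went wrong.
        browser.quit()
```

Calling `fetch_heading_texts("https://example.com")` would launch Chrome, load the page, and return a list of heading strings; swap the locator for whatever element holds the data you are after.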

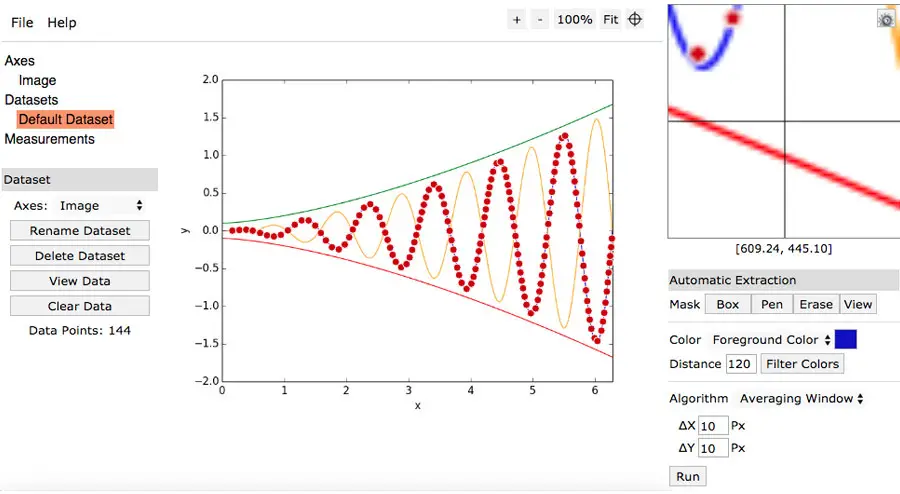

WebPlotDigitizer Review

Our rating: 4.4 out of 5

Pros

- Makes working with image graphs much easier

- Fairly easy to use, once you get the hang of it

Cons

- Not the most user-friendly software

- Very dull design

WebPlotDigitizer is not a program that everyone can use, or even one that everyone needs. However, if you work with graphs or are an engineer, it is definitely a program you should consider using. The program isn’t too old, but it has been out for enough years to have gained a bit of a following.

The program will not be something you use every day, but it is nice to have in reserve for the times you need it, and it does make your work a lot easier. If you work with graphs on a daily basis, this software is definitely one you should download. WebPlotDigitizer helps digitize image graphs into numerical data. You can work with many types of graph or map, from bar charts to ternary diagrams, and it will extract the data for you to analyze.

So how does it work?

You should know that the program is not completely automatic; don’t expect to take a picture and have all the data appear. You import the graph as an image, then select specific points on the line and trace over the line so that the program can pick up the points.

Is it easy to use?

Well, you don’t need to be a rocket scientist to use the program. It isn’t difficult, but you will need to be a bit tech savvy. It took us a few tries to get the program to properly digitize the data from the graph, but once you get the hang of it, it is fairly easy to use.

If you deal with a lot of graphs and want a program that can help you digitize the data with a bit of input from your end, this is the one. It does make the process a lot easier if you have a graph in image format and need to extract the data. If not, then there is no point in having the software.

Data Mining Methods for Beginners

The term data mining has become so widely used that we are likely to find at least one shared article on the topic in our social media news feeds. In fact, its overuse has often led to misunderstandings of what it is, or to explanations so difficult to follow that, in the end, we might as well be reading gibberish.

Technically, data mining is the process of finding specific information in a compilation of data and presenting the usable information in the hope of resolving a specific problem. In a nutshell, data mining is the act of examining large data sets to create new information. It covers different specialties, such as text mining, web mining, audio and video mining, visual data mining, and social network data mining.

There are many major data mining techniques in development, and recent data mining projects use association, classification, clustering, prediction, sequential patterns and decision trees. This guide will provide a brief examination of each of these techniques.

Association

Association is perhaps one of the more popular data mining techniques used today. In association, the user attempts to discover a pattern based on links between items within a single transaction. This is why the association technique is also commonly referred to as the relation technique. It is widely used in market basket analysis with the aim of identifying sets of products that consumers frequently purchase in one transaction.

Retail companies use the association technique to study the decision-making process behind their customers’ purchases. For example, when looking at past sales data, companies might discover that customers who buy chips also tend to buy beer. The company will therefore put beer and chips in the same shopping aisle or relatively close to one another. This makes shopping more efficient for customers and can ultimately increase sales.
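To make the chips-and-beer example concrete, here is a rough sketch of how pair rules can be scored with support (how often the pair appears together) and confidence (how often the second item appears given the first). The function name `pair_rules`, the sample baskets, and the 0.5 support threshold are all made up for illustration; production tools typically use algorithms such as Apriori over much larger itemsets.

```python
from collections import Counter
from itertools import combinations


def pair_rules(transactions, min_support=0.5):
    """Return (antecedent, consequent, support, confidence) for frequent item pairs."""
    total = len(transactions)
    item_counts = Counter()
    pair_counts = Counter()
    for basket in transactions:
        items = sorted(set(basket))          # ignore duplicates within a basket
        item_counts.update(items)
        pair_counts.update(combinations(items, 2))

    rules = []
    for (a, b), count in pair_counts.items():
        support = count / total
        if support >= min_support:
            # One rule in each direction: "a implies b" and "b implies a".
            rules.append((a, b, support, count / item_counts[a]))
            rules.append((b, a, support, count / item_counts[b]))
    return rules


# Hypothetical sales data: each inner list is one transaction.
baskets = [
    ["chips", "beer"],
    ["chips", "beer", "salsa"],
    ["chips", "soda"],
    ["bread", "milk"],
]
rules = pair_rules(baskets)
```

With this sample data only the beer and chips pair clears the support threshold, and the rule from beer to chips has a higher confidence than the reverse because chips are also bought without beer.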

Classification

Classification is a traditional data mining technique based on machine learning. Essentially, this technique is used to assign each item in a dataset to one of a predefined set of groups. The classification technique uses mathematical approaches such as decision trees, linear programming, statistics and neural networks.

In this technique, the user develops software that learns how to organize items into groups. For instance, classification can be applied to the problem “looking at records of employees who have left the company, predict who will leave the company next.” In this case, we separate the employee records into two classes named “remain” and “gone.” Our data mining software then assigns each employee to one of these groups based on their probability of leaving the company.
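As a small illustration of the “remain” versus “gone” example, the sketch below labels a new employee record by copying the label of the most similar past record (a one-nearest-neighbour classifier). This is a deliberately simple stand-in for the decision trees or neural networks mentioned above, and the two numeric features (years at the company, satisfaction score) are invented for the example.

```python
import math


def classify(record, labeled_records):
    """Return the label of the closest labeled record (1-nearest neighbour)."""
    nearest = min(labeled_records, key=lambda pair: math.dist(record, pair[0]))
    return nearest[1]


# Hypothetical history: (years at company, satisfaction score) -> outcome.
history = [
    ((1.0, 2.0), "gone"),
    ((2.0, 3.0), "gone"),
    ((6.0, 8.0), "remain"),
    ((8.0, 9.0), "remain"),
]

at_risk = classify((1.5, 2.5), history)
settled = classify((7.0, 8.5), history)
```

Here `at_risk` comes out as "gone" and `settled` as "remain", because each new record sits closest to past records with that outcome.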

Clustering

In data mining, clustering refers to the process of grouping a particular set of objects by looking at their characteristics and separating them based on their similarities. The clustering technique defines the classes itself and places each object in its respective class, whereas in the classification technique, objects are assigned to predefined categories.

To make things clearer, let’s look at the example of a library’s book management system. In a library, there is a large selection of books on a number of topics. The challenge is how to organize the books so that visitors can pick up several books on a certain topic without having to walk around the whole library. With the clustering technique, one cluster – or in this case, shelf – contains all books that are about a particular topic, and the cluster is given a meaningful, understandable name. If readers need a book on that topic, they just have to head to the aisle where those books are located instead of searching the entire building.
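One widely used clustering algorithm is k-means, which repeatedly assigns each point to its nearest cluster centre and then moves each centre to the average of its members. The sketch below is a minimal version of it; the function name, the sample points, and the fixed starting centres are assumptions chosen so the result is reproducible.

```python
import math


def k_means(points, centroids, iterations=10):
    """Group points around centroids; returns (final_centroids, clusters)."""
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        # Assignment step: put every point in the cluster of its nearest centroid.
        clusters = [[] for _ in centroids]
        for point in points:
            nearest = min(range(len(centroids)),
                          key=lambda i: math.dist(point, centroids[i]))
            clusters[nearest].append(point)

        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for old, members in zip(centroids, clusters):
            if members:
                new_centroids.append(
                    tuple(sum(axis) / len(members) for axis in zip(*members)))
            else:
                new_centroids.append(old)   # keep an empty cluster where it was

        if new_centroids == centroids:      # nothing moved, so we are done
            break
        centroids = new_centroids
    return centroids, clusters


# Hypothetical 2-D data with two obvious groups.
points = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]
centres, groups = k_means(points, [(0, 0), (10, 10)])
```

In the library analogy, each entry of `groups` is a shelf and each entry of `centres` is the "typical" book on that shelf; unlike classification, no labels were supplied in advance.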

Prediction

As the name suggests, the prediction technique aims to discover the relationships among independent variables and between independent and dependent variables. For example, this technique can be used to predict future profits if sales are set as the independent variable and profits as the dependent variable. Using past sales and profit data, the user can fit a regression curve for predicting profits.
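The simplest regression curve is a straight line fitted by least squares. The sketch below fits one with plain arithmetic; the sales and profit figures are invented sample data, and a real analysis would usually rely on a statistics library and check how well the line fits.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical past data: sales (independent) and profits (dependent).
sales = [100, 200, 300, 400]
profits = [15, 35, 55, 75]

slope, intercept = fit_line(sales, profits)
predicted_profit = slope * 500 + intercept   # forecast for sales of 500
```

With this sample data the fitted line has a slope of about 0.2 and an intercept of about -5, so the forecast for sales of 500 is roughly 95.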

Sequential Patterns

Sequential pattern analysis aims to uncover common patterns, regular events or trends in transaction data over a certain period. In sales, using past transaction data, a business can find a set of items that its customers purchase in one visit during certain months or seasons. Businesses use this information to offer better deals or discounts based on historical purchasing frequency.
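A very reduced form of this idea is to count how often one item is bought directly after another across customers’ purchase histories. The sketch below does just that; the function name, the sample histories, and the minimum count of 2 are illustrative assumptions, and full sequential pattern mining algorithms handle longer sequences and gaps between purchases.

```python
from collections import Counter


def frequent_followups(histories, min_count=2):
    """Count 'bought X, then Y next' pairs that occur for at least min_count customers."""
    counts = Counter()
    for history in histories:
        seen = set()
        for earlier, later in zip(history, history[1:]):
            if (earlier, later) not in seen:   # count each pair once per customer
                seen.add((earlier, later))
                counts[(earlier, later)] += 1
    return {pair: n for pair, n in counts.items() if n >= min_count}


# Hypothetical purchase histories, one ordered list per customer.
histories = [
    ["tent", "sleeping bag", "stove"],
    ["tent", "sleeping bag"],
    ["stove", "tent"],
]
patterns = frequent_followups(histories)
```

For this sample, the only pattern shared by at least two customers is a tent followed by a sleeping bag, which is the kind of result a business could turn into a follow-up offer.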

Decision trees

A decision tree is one of the most widely used data mining techniques because the model is simple and easy to understand. With the decision tree technique, the root of the tree is a question or condition that can have multiple responses. Each response then leads to a further set of questions or conditions that narrow down the data so the user can make a better final decision. For example, we can look at the following sequence of questions and answers and decide whether we want to play basketball outdoors or indoors:

- Outlook → Is it sunny? → If so, then how humid is it? → If high humidity, then I’ll play indoors → If low humidity, then I’ll play outdoors

- Outlook → Is it raining? → If so, how windy is it? → If high winds, then I’ll play indoors → If low winds, then I’ll play outdoors

- Outlook → Is it overcast? → If so, then I’ll play outdoors

Beginning at the root node, if the outlook is overcast then I will play basketball outdoors. If it’s raining, I’ll play basketball outdoors only if it’s not windy. And if the sun is out and shining, I will play basketball outdoors only if it’s not too humid.
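The basketball tree above can be written directly as nested conditions, which is essentially what a learned decision tree boils down to. The function name and the string values ("sunny", "rain", "overcast", "high", "low") are naming assumptions made for this sketch.

```python
def where_to_play(outlook, humidity=None, wind=None):
    """Walk the basketball decision tree and return 'indoors' or 'outdoors'."""
    if outlook == "overcast":
        return "outdoors"
    if outlook == "sunny":
        # On sunny days the next question is about humidity.
        return "indoors" if humidity == "high" else "outdoors"
    if outlook == "rain":
        # On rainy days the next question is about wind.
        return "indoors" if wind == "high" else "outdoors"
    raise ValueError(f"unknown outlook: {outlook!r}")


decision = where_to_play("sunny", humidity="low")
```

Each `if` corresponds to one node of the tree, and each `return` to a leaf.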

These are the six basic techniques used in data mining. Though some of them may appear similar in practice, they all have different aims. We are free to combine two or more data mining techniques to form a process that meets a business’s needs.

Guide to Web Scraping with JavaScript

Web scraping – also referred to as web harvesting or web data extraction – is the act of extracting large quantities of data from websites and saving it as a file on your computer or to a database, in a spreadsheet or other table format.

When we browse the web, most websites can only be viewed in a web browser (Internet Explorer, Firefox, Chrome, etc.) and do not offer an option to save a copy of the website’s data for private purposes. In this case, the only thing left to do is to manually copy and paste the data. As you may already know, going about this task manually is labor-intensive and can take many hours or days to complete, depending on how much data you need to compile.

Web scraping simplifies the task by automating the process, so instead of sitting in front of a computer all day copying and pasting website data into a spreadsheet, web scraping scripts will do the task for us and complete it in only a fraction of the time. In this tutorial, we’re going to learn the basics of automating and scraping the web using JavaScript. To get this done, we’re going to use Node.js with the Request and Cheerio libraries, which fetch a page’s HTML and parse it without opening a browser. (For pages that build their content with JavaScript, an alternative is Puppeteer, a Node library that drives headless Chrome, which is Chrome running without a visible window.) In a nutshell, we’ll be writing JavaScript code that fetches and reads the page for us automatically.

Prerequisites

Before we begin, take a moment to make sure that you have the following technologies ready on your machine:

- NodeJS

- ExpressJS: the famous Node framework that nearly everyone uses

- Request: makes the HTTP calls (the package has been deprecated since 2020 but still works for this example)

- Cheerio: traverses the DOM and extracts data

Setup

The setup is pretty straightforward. After getting familiar with Node.js, you can go ahead and include Express, Request, and Cheerio in your setup as dependencies. The following package.json file declares them:

{

"name" : "node-web-scrape",

"version" : "0.0.1",

"description" : "Scrape le web.",

"main" : "server.js",

"author" : "authorsname",

"dependencies" : {

"express" : "latest",

"request" : "latest",

"cheerio" : "latest"

}

}

With the package.json file ready, install the dependencies with:

npm install

Now let’s see what our setup will be making. In this guide, we’ll make a single request to IMDB to get:

- Movie title

- Year of its release

- IMDB community score

After compiling this information, we’ll save it into a .json file on our computer.

Application

Our web scraping application will be very simple. The things it’ll do include:

- Launch a web server

- Visit a URL on our server, which will activate the web scraper

- The web scraper will request the page it wants to extract information from

- The request will capture the HTML of the website and pass it to our server

- We will traverse the DOM and take the requested information

- The extracted data will be formatted into the form we need

- Finally, the formatted information will be saved into a .json file on our computer

Now let’s set the logic in our server.js file:

var express = require('express');

var fs = require('fs');

var request = require('request');

var cheerio = require('cheerio');

var app = express();

app.get('/scrape', function(req, res){

//Web scraping processes will be done here

});

app.listen(8081);

console.log('Done on port 8081');

exports = module.exports = app;

Requesting Information

Now that the application is ready to run, we’ll have to make the request to the external URL containing the information we want to scrape:

var express = require('express');

var fs = require('fs');

var request = require('request');

var cheerio = require('cheerio');

var app = express();

app.get('/scrape', function(req, res){

//The website we want to scrape info from – The Wolf of Wall Street (2013)

var url = 'http://www.imdb.com/title/tt0993846/';

//Structure of request

//First parameter is our URL

//Callback function takes 3 parameters: an error, the response object and the html

request(url, function(error, response, html){

//Checking to make sure no errors occur

if(!error){

//Utilize cheerio library on the returned HTML

var $ = cheerio.load(html);

//Lastly, define the variables we want to take

var title, release, rating;

var json = { title : "", release : "", rating : "" };

}

});

});

app.listen(8081);

console.log('Done on port 8081');

exports = module.exports = app;

The request function requires two parameters (URL and a callback). For the URL, we set the link of the IMDB movie. In the callback, we’ll take three parameters (error, response and html).

Traversing the DOM

First, we’ll need the movie title. Open the IMDB page for the movie, open Developer Tools and inspect the movie title element. We’re looking for a unique element which will assist in locating the title of the movie (header). Search for <h1 class="header">.

var express = require('express');

var fs = require('fs');

var request = require('request');

var cheerio = require('cheerio');

var app = express();

app.get('/scrape', function(req, res){

var url = 'http://www.imdb.com/title/tt0993846/';

request(url, function(error, response, html){

if(!error){

var $ = cheerio.load(html);

var title, release, rating;

var json = { title : "", release : "", rating : "" };

//Using unique header as a starting point

$('.header').filter(function(){

//Store filtered data into a variable

var data = $(this);

//Title rests within the first child element of header tag

//Utilize jQuery-style methods for easy navigation

title = data.children().first().text();

//After getting the title, store it to json object

json.title = title;

});

}

});

});

app.listen(8081);

console.log('Done on port 8081');

exports = module.exports = app;

Next we’ll need the release year of the movie. We’ll need to repeat the process, this time finding a unique element in the DOM for release year (<h1> tag).

var express = require('express');

var fs = require('fs');

var request = require('request');

var cheerio = require('cheerio');

var app = express();

app.get('/scrape', function(req, res){

var url = 'http://www.imdb.com/title/tt0993846/';

request(url, function(error, response, html){

if(!error){

var $ = cheerio.load(html);

var title, release, rating;

var json = { title : "", release : "", rating : "" };

$('.header').filter(function(){

var data = $(this);

title = data.children().first().text();

//Repeat the same process but for release year

//Find exact location of release year

release = data.children().last().children().text();

json.title = title;

//Extract and save to json object

json.release = release;

});

}

});

});

app.listen(8081);

console.log('Done on port 8081');

exports = module.exports = app;

Finally, we repeat the process for community rating. The unique class name is .star-box-giga-star.

var express = require('express');

var fs = require('fs');

var request = require('request');

var cheerio = require('cheerio');

var app = express();

app.get('/scrape', function(req, res){

var url = 'http://www.imdb.com/title/tt0993846/';

request(url, function(error, response, html){

if(!error){

var $ = cheerio.load(html);

var title, release, rating;

var json = { title : "", release : "", rating : "" };

$('.header').filter(function(){

var data = $(this);

title = data.children().first().text();

release = data.children().last().children().text();

json.title = title;

json.release = release;

});

//Community rating is found in a separate section of the DOM, so write a new jQuery selector

$('.star-box-giga-star').filter(function(){

var data = $(this);

//.star-box-giga-star class is exactly where we wanted

//To get the rating, simply get the text

rating = data.text();

json.rating = rating;

});

}

});

});

app.listen(8081);

console.log('Done on port 8081');

exports = module.exports = app;

That’s all there is to retrieving information from the website. In a nutshell, the steps mentioned above are:

- Find a unique element or attribute on the DOM to assist in singling out data

- If no unique element exists on a certain tag, then find the closest tag as your starting point

- If necessary, traverse the DOM
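The same locate-then-extract idea carries over to other languages. As a rough sketch in Python using only the standard library, the parser below finds the first element with a given class and collects the text inside it. The class `ClassTextExtractor`, the helper `text_of`, and the sample markup are all hypothetical; the markup merely mimics the structure described above and is not IMDB's actual page.

```python
from html.parser import HTMLParser

# Tags that never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"br", "hr", "img", "input", "link", "meta"}


class ClassTextExtractor(HTMLParser):
    """Collect the text inside the first element carrying a given class."""

    def __init__(self, class_name):
        super().__init__()
        self.class_name = class_name
        self.depth = 0        # greater than 0 while inside the matching element
        self.done = False     # True once the matching element has been closed
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if self.done or tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1
        elif self.class_name in (dict(attrs).get("class") or "").split():
            self.depth = 1

    def handle_endtag(self, tag):
        if tag in VOID_TAGS or not self.depth:
            return
        self.depth -= 1
        if self.depth == 0:
            self.done = True

    def handle_data(self, data):
        if self.depth:
            self.parts.append(data)


def text_of(html, class_name):
    """Return the stripped text of the first element with class_name."""
    parser = ClassTextExtractor(class_name)
    parser.feed(html)
    return "".join(parser.parts).strip()


# Made-up sample markup for illustration only.
SAMPLE_HTML = """
<html><body>
  <h1 class="header"><span>The Wolf of Wall Street</span> <span>(<a>2013</a>)</span></h1>
  <div class="star-box-giga-star"> 8.2 </div>
</body></html>
"""

header_text = text_of(SAMPLE_HTML, "header")
rating_text = text_of(SAMPLE_HTML, "star-box-giga-star")
```

The unique class plays the same role as the selector in the Cheerio code: it is the starting point, and everything else is reached by walking down from it.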

Formatting and Extracting

Now that we’ve successfully extracted the information, it’s time to format it and save it to the project folder. Everything has been stored in a variable named json. If you’re unfamiliar with what the fs library is for, it gives access to our computer’s file system. The following code will write the file to the file system:

var express = require('express');

var fs = require('fs');

var request = require('request');

var cheerio = require('cheerio');

var app = express();

app.get('/scrape', function(req, res){

var url = 'http://www.imdb.com/title/tt0993846/';

request(url, function(error, response, html){

//Declared before the error check so json always exists when we write the file

var title, release, rating;

var json = { title : "", release : "", rating : "" };

if(!error){

var $ = cheerio.load(html);

$('.header').filter(function(){

var data = $(this);

title = data.children().first().text();

release = data.children().last().children().text();

json.title = title;

json.release = release;

});

$('.star-box-giga-star').filter(function(){

var data = $(this);

rating = data.text();

json.rating = rating;

});

}

//Use default 'fs' library to write to the system

//Pass 3 parameters to the writeFile function

//Parameter 1: output.json – filename

//Parameter 2: JSON.stringify(json, null, 4) – data to write, the 4-space indent makes the JSON easier to read

//Parameter 3: callback function – tells the status of function

fs.writeFile('output.json', JSON.stringify(json, null, 4), function(err){

if(err){ return console.error(err); }

console.log('File successfully recorded. Check project directory for the output .json file');

});

//Finally, send a message to your browser to remind you that the app has no UI

res.send('Check console');

});

});

app.listen(8081);

console.log('Done on port 8081');

exports = module.exports = app;

With that, we are ready to scrape and store the extracted information. Start the Node server by running node server.js in the project directory, then open http://localhost:8081/scrape in your browser and see what happens.
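For comparison, the final write step has a close equivalent in Python, where `json.dumps(record, indent=4)` plays the role of `JSON.stringify(json, null, 4)`. The sketch below is only an illustration; the helper name `save_record` and the sample record values are assumptions.

```python
import json
from pathlib import Path


def save_record(record, path):
    """Write record to path as JSON indented by four spaces."""
    Path(path).write_text(json.dumps(record, indent=4), encoding="utf-8")


# Made-up record with the same three fields the scraper collects.
sample_record = {"title": "Example Movie", "release": "2013", "rating": "8.2"}
pretty = json.dumps(sample_record, indent=4)
```

Calling `save_record(sample_record, "output.json")` would produce a file with one field per line, which is the same readable layout the Node version writes.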